Less animal testing thanks to machine learning

April 26, 2022

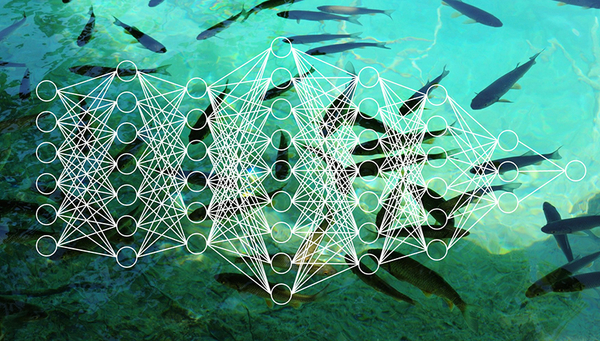

Countless chemical substances, including fertilisers and pesticides but also pharmaceutical substances and industrial products, leak into groundwater, lakes and rivers. “We want to know what the impact of these chemicals is on aquatic species, and whether they are toxic or not,” says Marco Baity-Jesi, Head of the Eawag Data Science Group. To do this, fish are typically exposed to a substance at different concentrations in different tanks. These animal experiments, which are fatal for the fish, are ethically controversial and also costly. “Instead of conducting such experiments, we want to predict the effect of chemicals on fish using machine learning methods,” explains Baity-Jesi, a physicist. For this project, his team is collaborating with the group led by Kristin Schirmer, which is developing experimental alternatives to animal tests with fish using fish cell lines.

For the source material, the researchers accessed the database of the US Environmental Protection Agency. This contains the results of experiments with almost 2,200 chemicals tested on 345 different fish species. In all, the researchers were able to use around 20,000 entries. “It’s not a huge dataset, but it’s enough for our purposes,” says the physicist. After analysing and setting up the data, his team wrote the software for various machine learning models suitable for the prepared input. The researchers used most of the data as training material to feed their models, but kept a small portion as a test dataset so they could check how accurately the machine’s calculated predictions matched the experimental results.
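The hold-out procedure described above can be illustrated with a minimal sketch. It is not the researchers' software: the records, the 20 per cent test fraction, the function names and the majority-class placeholder model are all assumptions made for illustration.

```python
import random
from collections import Counter


def split_train_test(records, test_fraction=0.2, seed=0):
    """Shuffle the records and hold out a fraction as a test set."""
    rng = random.Random(seed)
    shuffled = list(records)
    rng.shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    # The first n_test shuffled records become the test set, the rest the training set.
    return shuffled[n_test:], shuffled[:n_test]


def accuracy(predictions, labels):
    """Fraction of predictions that match the experimental labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


# Invented toy records: (chemical, species, toxicity category).
records = [
    (f"chem_{i}", f"species_{i % 3}", "toxic" if i % 2 else "non-toxic")
    for i in range(20)
]

train, test = split_train_test(records)

# Placeholder "model" (an assumption): always predict the most common
# category seen in the training data.
majority = Counter(label for _, _, label in train).most_common(1)[0][0]
predictions = [majority for _ in test]
test_accuracy = accuracy(predictions, [label for _, _, label in test])
```

The important point is that the test records are never shown to the model during training, so the accuracy measured on them indicates how well the predictions carry over to unseen experiments.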

Precise predictions

The researchers have now published their results in an initial study. Based on the learned patterns, the models can predict in a second whether a chemical is more or less toxic for a fish species. The computer’s predictions proved to be more than 90 per cent correct. “The model predictions can’t be completely perfect,” explains Baity-Jesi. “Because the experiments aren’t either.” For example, one often finds conflicting data on experiments with a particular substance and fish species. “If you perform an experiment with the same chemical twice, it may end up in two different categories of toxicity,” the researcher says. “But the models can’t be better than the data they learn from.”
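Why conflicting experiments cap the achievable accuracy can be shown with a small worked example. This is a sketch under stated assumptions, not the analysis from the study: the records are invented, and `accuracy_ceiling` is a hypothetical helper that assumes a model sees only the chemical and the species, so it must give the same answer for every repeat of the same pair.

```python
from collections import Counter, defaultdict


def accuracy_ceiling(records):
    """Best possible accuracy when repeated experiments disagree.

    A model that only sees (chemical, species) can at best predict the
    most frequent category recorded for each pair.
    """
    by_pair = defaultdict(Counter)
    for chemical, species, label in records:
        by_pair[(chemical, species)][label] += 1
    best_correct = sum(max(counts.values()) for counts in by_pair.values())
    return best_correct / len(records)


# Invented toy data: chem_a on trout was tested three times, and one
# repeat landed in a different toxicity category.
toy_records = [
    ("chem_a", "trout", "toxic"),
    ("chem_a", "trout", "toxic"),
    ("chem_a", "trout", "non-toxic"),
    ("chem_b", "trout", "non-toxic"),
    ("chem_b", "minnow", "toxic"),
]

ceiling = accuracy_ceiling(toy_records)  # 4 of 5 records can be matched
```

In this toy case no model of that kind can score above 80 per cent, however good it is, because one of the five experimental results contradicts the other two for the same chemical and species.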

The authors of the paper were surprised to find that a new model, which has been successful in human toxicology, did not produce results that were more accurate than the standard models used. “Overall, our outcome looks very good,” comments Baity-Jesi. “But although our models are online and anyone can use them, many more tests are needed before the machine can be deployed as an alternative to animal testing and chemical regulation.”

From fish to invertebrates and algae

For instance, the current results represent only a fraction of all possible chemicals and organisms to test. To expand the scope of the models, a broader range of data is needed both for training the algorithms and for testing them. So next, besides fish, the research group wants to include invertebrates and algae in its work. In addition, data from experiments with fish cell lines should also support the models in the future. The success of this work by Schirmer’s group was demonstrated last year when the OECD recognised the fish cell line assay developed at Eawag as a new guideline in the approval procedures of chemicals.

“The more data you present to the models, the better they get,” explains Baity-Jesi. Moreover, care must be taken to ensure that the models are trained on data representative of the cases in which they will be used. If chemicals or organisms deviate too much from those tested in the experiments, the accuracy of the predictions drops, so the limits of the models’ reliability must be identified.

In the future, computer predictions will probably still need to be confirmed by testing in some cases. “Therefore, it is unlikely that machine learning alone will soon be able to completely replace animal testing, but it can certainly make it possible to reduce it tremendously,” the researchers write. And even at the current stage of development, Baity-Jesi sees an important use for the computer algorithms: “For example, if you only have resources for five experiments but thousands of chemicals and animal species, our models can tell you which substances and animals should be tested first.” In this way, machine learning helps prioritise environmental research.

Cover picture: Trout are often used in experiments. Machine learning could be an alternative to fish testing. (Image: istock, edited by Eawag)

Cooperations

- Eawag

- ETH Zurich

- Sapienza University of Rome, Italy

- Swiss Federal Institute for Forest, Snow, and Landscape Research WSL

- EPFL

Funding

This project was funded by the SDSC grant “Enhancing Toxicological Testing through Machine Learning” (project No C20-04).