Department Systems Analysis, Integrated Assessment and Modelling

Metamorphic Analysis of Machine Learning and Conceptual Hydrological Models

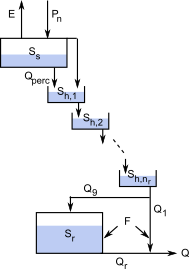

Predicting the response of a hydrological system to driving forces modified beyond the patterns encountered during model calibration is of high importance, e.g. for predicting the impacts of climate change. Metamorphic testing is a methodology for defining input changes for which the expected response can be described, at least qualitatively, even in the absence of precise knowledge, and for testing whether models behave "consistently" with these responses. We investigate a set of machine learning and conceptual hydrological models within this framework. Because we are quite uncertain about the true response, we call our approach "metamorphic analysis" rather than metamorphic testing.

The goal is to investigate whether we can learn from the differences among the predictions of the various models. Specifically, we investigate the differences in predictions for a uniform 10% increase in precipitation and for a uniform temperature increase of 1 °C. In addition to gaining insight into the magnitude of prediction uncertainty, we expect that this approach may uncover situations in which the machine learning models extrapolate incorrectly from other catchments, as well as situations in which they may be able to identify incorrect or incomplete process descriptions in conceptual hydrological models. This could inspire improvements to the machine learning models, e.g. by adapting the set of catchment attributes considered, and also improvements to the conceptual models, by adapting process formulations or adding processes that had not previously been considered. The approach and some of the lessons learned are demonstrated by analyzing selected features of the simulations and predictions for selected basins of the US CAMELS data set.
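To make the structure of such an analysis concrete, the following Python sketch applies the two input transformations described above (precipitation multiplied by 1.10, temperature raised by 1 °C) to a synthetic forcing series and tabulates the relative change in mean discharge for several models. Everything here is a hypothetical stand-in: the single-bucket model `toy_bucket_model`, its parameters, the synthetic forcing, and the helper names `TRANSFORMS`, `relative_change` and `metamorphic_analysis` are illustrative assumptions, not the machine learning or conceptual models or the CAMELS data used in this project.

```python
import numpy as np


def toy_bucket_model(precip, temp, capacity=100.0, k=0.05, et_coeff=0.2):
    """Hypothetical single-bucket rainfall-runoff model (illustration only).

    precip: daily precipitation [mm/day]; temp: daily air temperature [deg C].
    Evapotranspiration grows linearly with positive temperature and is
    limited by the water currently stored in the bucket.
    """
    storage = 0.0
    discharge = np.zeros(len(precip))
    for t in range(len(precip)):
        et = min(storage, et_coeff * max(temp[t], 0.0))
        storage += precip[t] - et
        overflow = max(storage - capacity, 0.0)  # saturation-excess runoff
        storage -= overflow
        baseflow = k * storage                   # linear-reservoir outflow
        storage -= baseflow
        discharge[t] = overflow + baseflow
    return discharge


# The two input transformations: +10 % precipitation, +1 deg C temperature.
TRANSFORMS = {
    "precip_x1.10": lambda p, t: (p * 1.10, t),
    "temp_plus1C": lambda p, t: (p, t + 1.0),
}


def relative_change(model, precip, temp, transform):
    """Relative change in mean discharge caused by one input transformation."""
    q_ref = model(precip, temp).mean()
    q_new = model(*transform(precip, temp)).mean()
    return (q_new - q_ref) / q_ref


def metamorphic_analysis(models, precip, temp):
    """Tabulate the response of every model to every transformation."""
    return {
        name: {tname: relative_change(model, precip, temp, tf)
               for tname, tf in TRANSFORMS.items()}
        for name, model in models.items()
    }


# Synthetic forcing (assumption): three years of daily data.
rng = np.random.default_rng(42)
days = 3 * 365
precip = rng.gamma(shape=0.5, scale=6.0, size=days)
temp = 10.0 + 10.0 * np.sin(2 * np.pi * np.arange(days) / 365) + rng.normal(0.0, 2.0, days)

# Two hypothetical parameterizations standing in for a set of different models.
models = {
    "bucket_fast": lambda p, t: toy_bucket_model(p, t, capacity=50.0, k=0.10),
    "bucket_slow": lambda p, t: toy_bucket_model(p, t, capacity=150.0, k=0.02),
}
results = metamorphic_analysis(models, precip, temp)
for name, res in results.items():
    print(name, {tname: round(value, 3) for tname, value in res.items()})
```

Comparing the entries of `results` across models is the point of the exercise: a large spread, or a model whose response has a different sign from the others, flags a prediction worth inspecting rather than a proven error. In this toy model, evapotranspiration is the only temperature-dependent process, so warming can only reduce discharge; a model with snow accumulation and melt could respond differently, which is the kind of difference such an analysis is meant to surface.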

References

Metamorphic Analysis of Machine Learning and Conceptual Hydrological Models by Comparing Out-of-Domain Predictions. In preparation, 2022.

Funding

Eawag

Duration

2021 - 2022